Robotics Engineer Interview Questions: Preparation Guide for 2026

Robotics interviews follow predictable patterns—if you know which pattern applies to your target company. One minute you’re solving a LeetCode-style coding problem, the next you’re whiteboarding a SLAM pipeline or explaining how you’d tune a PID controller. This dual requirement—software engineering fundamentals plus domain expertise—is what catches people off guard.

What gets asked depends heavily on the company type. Big tech emphasizes algorithms and ML. Robotics specialists want deep domain knowledge. Startups care about shipping. Industrial automation focuses on safety and reliability.

This guide covers interview patterns globally, with emphasis on US tech hubs, where many robotics companies are concentrated.

What Robotics Interviews Actually Test

Robotics interviews evolved from academic-style oral exams to structured technical assessments. The difference from pure software roles is the dual requirement: you need software engineering fluency AND hardware understanding.

Four categories appear consistently across robotics companies:

Coding fundamentals form the foundation. Expect data structures and algorithms, often wrapped in robotics-flavored problems: a linked-list question might be framed around a sequence of waypoints on a planned path, and a tree question might appear as a hierarchy of coordinate frames.

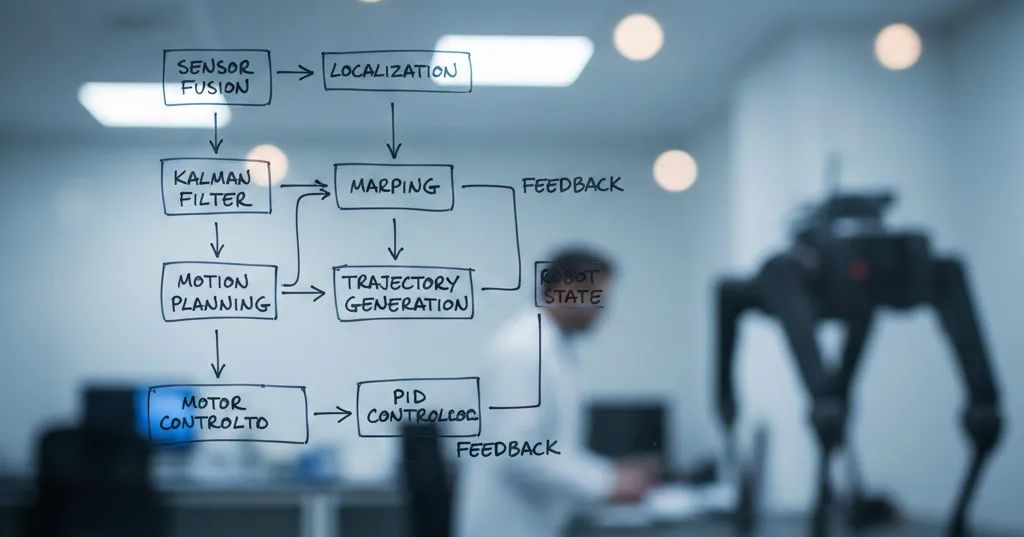

Domain technical knowledge separates robotics candidates from general software engineers. Control systems, SLAM, perception, kinematics—these are the filters. You’ll explain trade-offs between Kalman filters and particle filters, discuss coordinate frame transformations, or analyze why a controller oscillates.

System design tests architecture thinking. How would you structure a perception pipeline? Design a fault-tolerant navigation stack? Scale a fleet management system? The emphasis is on real-world constraints: latency, reliability, safety.

Behavioral questions evaluate how you work. Robotics is inherently multidisciplinary—you’ll collaborate with mechanical engineers, electrical engineers, domain experts. Interviewers probe your approach to failure, cross-team communication, and safety mindset.

The weight of each category varies by company and role. A controls-focused role at Boston Dynamics emphasizes dynamics and control theory. A perception role at an autonomous vehicle company dives into computer vision and ML infrastructure. Research the company’s technical emphasis before interviewing.

Interview Formats by Company Type

Company-specific patterns are a major gap in most guides. The format and emphasis differ significantly:

| Company Type | Technical Emphasis | Interview Format | Question Focus |
|---|---|---|---|
| Big Tech (Amazon, Google, Tesla) | ML, perception, large-scale systems | 5-6 rounds: coding screens, technical deep-dives, behavioral, system design | LeetCode-style coding, ML architecture, scalability |
| Robotics Specialists (Boston Dynamics, iRobot) | Domain depth, dynamics, controls | 3-4 rounds: technical deep-dives, presentation, practical coding | Dynamics, control theory, SLAM, locomotion |
| Startups | Practical skills, shipping | 2-3 rounds: focused technical, culture fit | “What would you build in 30 days?” practical problems |
| Industrial Automation (John Deere, Universal Robots) | Safety, reliability, deployment | 3-4 rounds: technical scenarios, standards knowledge | Safety protocols, fault tolerance, real-world deployment |

Big Tech runs structured processes similar to general software roles, but with domain flavor. Amazon’s Leadership Principles frame behavioral questions. Tesla combines systems design with hands-on coding. The coding rounds lean on algorithms and data structures—prepare like a software engineer interview, then layer on robotics knowledge.

Robotics Specialists dive deep into domain specifics. Boston Dynamics asks about legged locomotion, contact dynamics, and real-time control. iRobot focuses on navigation, SLAM, and consumer product constraints. Presentations are common—expect to explain a past project in detail.

Startups move faster and prioritize practical impact. Fewer rounds, more emphasis on “can you ship?” Questions often frame as real problems: “Our robot gets stuck in doorways—how would you approach this?” Culture fit matters significantly—you’ll wear multiple hats.

Industrial Automation emphasizes safety and reliability. Functional safety standards (ISO 13849, IEC 61508) may appear in conversation. Deployment constraints—harsh environments, maintenance requirements, operator interfaces—are part of the discussion.

Research your target company’s pattern. LinkedIn connections, company engineering blogs, and Glassdoor interview reports provide clues. Adjust your preparation emphasis accordingly.

Skills to Prioritize

Before diving into specific questions, know what fundamentals matter. Python and C++ are universal across robotics engineering roles—master these first. Beyond that, research your target domain: controls roles need dynamics and state estimation, perception roles need computer vision and machine learning, planning roles need algorithms and optimization.

What interviewers actually look for is technical reasoning. Why did you choose A* over RRT? When would particle filter SLAM outperform graph-based approaches? The reasoning matters more than the right answer.


Coding Questions with Solutions

Here are working solutions to problems that appear frequently in robotics interviews.

Python: PID Controller

Question: “Implement a PID controller class for motor speed control.”

PID control is foundational—the most common control theory coding question. Understanding each term matters more than the implementation itself.

```python
class PIDController:
    def __init__(self, kp, ki, kd, setpoint=0):
        self.kp = kp  # Proportional gain
        self.ki = ki  # Integral gain
        self.kd = kd  # Derivative gain
        self.setpoint = setpoint
        self.integral = 0
        self.prev_error = 0

    def compute(self, measurement, dt):
        if dt <= 0:
            raise ValueError("dt must be positive")  # avoid division by zero below
        error = self.setpoint - measurement

        # Proportional term: respond to current error
        p_term = self.kp * error

        # Integral term: accumulate past error
        self.integral += error * dt
        i_term = self.ki * self.integral

        # Derivative term: predict future error based on rate of change
        # Note: In production, take derivative of measurement to avoid "derivative kick" on setpoint changes
        derivative = (error - self.prev_error) / dt
        d_term = self.kd * derivative
        self.prev_error = error

        return p_term + i_term + d_term
```

Interviewers look for: understanding each term (P responds to the present error, I accumulates past error, D anticipates from the rate of change), awareness of integral windup (deliberately not handled above; expect to be asked about it), and derivative kick on setpoint changes. The senior answer: in production systems, compute the derivative on the measurement rather than the error, so a setpoint step does not produce a spike. A strong answer also discusses tuning strategies and when each term matters.
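Both follow-ups can be handled in a few extra lines. The sketch below is one common approach, not the only one: it clamps the integral so the integral term alone cannot exceed the actuator limits, and it differentiates the measurement instead of the error. The class name PIDControllerAW and the default output limits of ±1 are illustrative assumptions.

```python
class PIDControllerAW:
    """PID sketch with integral clamping (anti-windup) and derivative on measurement."""

    def __init__(self, kp, ki, kd, setpoint=0.0, out_min=-1.0, out_max=1.0):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.setpoint = setpoint
        self.out_min, self.out_max = out_min, out_max  # actuator limits (assumed)
        self.integral = 0.0
        self.prev_measurement = None  # no derivative on the very first sample

    def compute(self, measurement, dt):
        if dt <= 0:
            raise ValueError("dt must be positive")
        error = self.setpoint - measurement

        # Anti-windup by clamping: the integral term alone may never push the
        # output past the actuator limits, so it cannot wind up while saturated.
        self.integral += error * dt
        if self.ki != 0:
            i_lo, i_hi = sorted((self.out_min / self.ki, self.out_max / self.ki))
            self.integral = min(max(self.integral, i_lo), i_hi)

        # Derivative on measurement: equals d(error)/dt for a constant setpoint,
        # but a setpoint step no longer produces a derivative "kick".
        if self.prev_measurement is None:
            derivative = 0.0
        else:
            derivative = -(measurement - self.prev_measurement) / dt
        self.prev_measurement = measurement

        output = self.kp * error + self.ki * self.integral + self.kd * derivative
        return min(max(output, self.out_min), self.out_max)
```

With ki = 1 and limits of ±1, a long stretch of large error leaves the integral at 1 instead of letting it grow without bound, so the output starts leaving saturation as soon as the error changes sign. Back-calculation and conditional integration are alternative anti-windup schemes worth naming in an interview.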

Python: Odometry Computation

Question: “Compute robot pose from wheel encoder ticks for a differential drive robot.”

```python
import math

class DifferentialDriveOdometry:
    def __init__(self, wheel_radius, track_width):
        self.wheel_radius = wheel_radius
        self.track_width = track_width  # distance between the two drive wheels
        self.x = 0.0
        self.y = 0.0
        self.theta = 0.0

    def update(self, left_ticks, right_ticks, ticks_per_rev):
        # left_ticks / right_ticks are tick counts since the previous update
        # Convert ticks to distance travelled by each wheel
        left_dist = 2 * math.pi * self.wheel_radius * (left_ticks / ticks_per_rev)
        right_dist = 2 * math.pi * self.wheel_radius * (right_ticks / ticks_per_rev)

        # Compute change in pose
        delta_dist = (right_dist + left_dist) / 2
        delta_theta = (right_dist - left_dist) / self.track_width

        # Update pose using midpoint approximation (heading halfway through the step)
        self.x += delta_dist * math.cos(self.theta + delta_theta / 2)
        self.y += delta_dist * math.sin(self.theta + delta_theta / 2)

        # Keep heading wrapped to [-pi, pi]
        new_theta = self.theta + delta_theta
        self.theta = math.atan2(math.sin(new_theta), math.cos(new_theta))

        return (self.x, self.y, self.theta)
```

Key interview points: why the heading is evaluated at θ + Δθ/2 (a midpoint approximation that is more accurate than using the start-of-step heading), what errors accumulate (wheel slip, uneven surfaces), and how you’d handle tick overflow.
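The update method above assumes it receives tick counts since the previous call. Real encoder counters are usually fixed-width registers that wrap around, so raw readings must be differenced with wraparound in mind. A minimal sketch, assuming an unsigned counter of `bits` width (16 is a hypothetical default) sampled often enough that the wheel moves less than half the counter range between reads:

```python
def encoder_delta(prev_count, curr_count, bits=16):
    """Signed tick change between two raw readings of a wrapping counter.

    Assumes the counter wraps modulo 2**bits and that the wheel moves less than
    half the counter range between samples; otherwise direction is ambiguous.
    """
    modulus = 1 << bits
    delta = (curr_count - prev_count) % modulus  # always in [0, modulus)
    if delta >= modulus // 2:
        delta -= modulus  # a huge forward jump is really a small backward step
    return delta
```

The signed deltas can be passed straight to update(). Widening the counter in firmware or sampling faster are the usual alternatives when the half-range assumption might not hold.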

Python: A* Path Planning

A* is the standard path planning algorithm question. The key insight: f = g + h, where g is cost from start and h is estimated cost to goal.

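```python
import heapq
import itertools
from collections import defaultdict

def a_star_search(graph, start, goal, heuristic):
    """
    graph: dict mapping node -> [(neighbor, cost), ...]
    heuristic: function(node, goal) -> estimated cost (admissible for optimal paths)
    Returns the list of nodes from start to goal, or None if goal is unreachable.
    """
    counter = itertools.count()  # tie-breaker so nodes never need to be comparable
    # Heap entries: (f_score, tie, g_score_at_push, node)
    open_set = [(heuristic(start, goal), next(counter), 0, start)]
    came_from = {}
    g_score = defaultdict(lambda: float('inf'))
    g_score[start] = 0

    while open_set:
        _, _, g, current = heapq.heappop(open_set)
        if g > g_score[current]:
            continue  # stale entry: a cheaper route to this node was found later

        if current == goal:
            # Reconstruct path by walking parent pointers back to start
            path = [current]
            while current in came_from:
                current = came_from[current]
                path.append(current)
            return path[::-1]

        for neighbor, cost in graph.get(current, []):  # nodes may lack outgoing edges
            tentative_g = g_score[current] + cost
            if tentative_g < g_score[neighbor]:
                came_from[neighbor] = current
                g_score[neighbor] = tentative_g
                f_score = tentative_g + heuristic(neighbor, goal)
                heapq.heappush(open_set, (f_score, next(counter), tentative_g, neighbor))

    return None  # No path found
```

Interviewers want to see if you understand when A* is appropriate (known map, discrete search) versus sampling-based methods like RRT (high-dimensional, continuous spaces). Discuss heuristic choice: Manhattan distance for 4-connected grids, octile or Euclidean distance for 8-connected grids and continuous spaces. The heuristic must be admissible (never overestimate the remaining cost) for A* to return an optimal path.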
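To run the search on a map, the occupancy grid first has to be turned into the adjacency format the function expects. The helpers below are an illustrative sketch assuming a 0/1 grid, 4-connectivity, and unit step costs; grid_to_graph and manhattan are hypothetical names, not library functions.

```python
def grid_to_graph(grid):
    """Convert an occupancy grid (0 = free, 1 = occupied) into the adjacency
    format used by a_star_search: {(row, col): [((row2, col2), cost), ...]}.

    Assumes 4-connectivity with unit step cost.
    """
    rows, cols = len(grid), len(grid[0])
    graph = {}
    for r in range(rows):
        for c in range(cols):
            if grid[r][c]:
                continue  # occupied cells get no node
            neighbors = []
            for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nr, nc = r + dr, c + dc
                if 0 <= nr < rows and 0 <= nc < cols and not grid[nr][nc]:
                    neighbors.append(((nr, nc), 1.0))
            graph[(r, c)] = neighbors
    return graph


def manhattan(node, goal):
    """Admissible heuristic for a 4-connected grid with unit step cost."""
    return abs(node[0] - goal[0]) + abs(node[1] - goal[1])
```

For a grid like [[0, 0, 0], [1, 1, 0], [0, 0, 0]], where a wall blocks the middle row except at the right, a_star_search(grid_to_graph(grid), (0, 0), (2, 0), manhattan) must detour through (0, 2), (1, 2), and (2, 2). Switching to 8-connectivity would need diagonal costs of √2 and an octile or Euclidean heuristic to stay admissible.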

C++: ROS 2 Publisher Node

ROS 2 has largely replaced ROS 1, which reached EOL in 2025. Expect questions about QoS profiles, lifecycle nodes, and the DDS middleware.

```cpp
#include <chrono>
#include <functional>
#include <memory>

#include <rclcpp/rclcpp.hpp>
#include <std_msgs/msg/string.hpp>

using namespace std::chrono_literals;

class RobotPublisher : public rclcpp::Node {
public:
  RobotPublisher() : Node("robot_publisher") {
    // QoS: RELIABLE retransmits lost samples until acknowledged; BEST_EFFORT never retries
    auto qos = rclcpp::QoS(rclcpp::KeepLast(10))
                   .reliable()
                   .durability_volatile();

    publisher_ = this->create_publisher<std_msgs::msg::String>(
        "robot_status", qos);

    timer_ = this->create_wall_timer(
        500ms, std::bind(&RobotPublisher::publish_callback, this));
  }

private:
  void publish_callback() {
    auto message = std_msgs::msg::String();
    message.data = "Status: operational";
    RCLCPP_INFO(this->get_logger(), "Publishing: '%s'", message.data.c_str());
    publisher_->publish(message);
  }

  rclcpp::Publisher<std_msgs::msg::String>::SharedPtr publisher_;
  rclcpp::TimerBase::SharedPtr timer_;
};

int main(int argc, char** argv) {
  rclcpp::init(argc, argv);
  rclcpp::spin(std::make_shared<RobotPublisher>());
  rclcpp::shutdown();
  return 0;
}
```

Key talking points: QoS settings (RELIABLE for commands and other data that must arrive, BEST_EFFORT for high-rate telemetry and sensor streams), the shift from the ROS 1 master to DDS-based discovery in ROS 2, and lifecycle nodes for managed systems. Senior candidates should also discuss executors: rclcpp::spin runs a single-threaded executor, and production systems often use a MultiThreadedExecutor with callback groups so that a slow callback does not block the others.

Technical Domain Questions by Category

Control Systems & Dynamics

“Explain PID control and how you’d tune the gains.”

Proportional-Integral-Derivative control is the workhorse of robotics. P responds to current error—high Kp causes fast response but overshoot. I accumulates past error—eliminates steady-state error but can cause windup. D predicts future error—damps oscillation but amplifies noise.

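Tuning strategy: start with Kp = Ki = Kd = 0. Increase Kp until the system oscillates, then back it off (about 20% is a common rule of thumb). Add Kd to reduce the oscillation, then add Ki slowly to eliminate steady-state error. Ziegler-Nichols is the classic method, but it targets roughly quarter-amplitude decay, which is aggressive; in practice its gains are a starting point that you refine by hand using your understanding of the system.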
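The Ziegler-Nichols closed-loop method turns that oscillation experiment into gains: the P-only gain at which oscillation is just sustained is the ultimate gain Ku, and the oscillation period is Tu. The sketch below encodes the classic textbook table for the parallel PID form; the function name is hypothetical, and the resulting gains are a starting point rather than final values.

```python
def ziegler_nichols(ku, tu, controller="pid"):
    """Classic Ziegler-Nichols ultimate-gain tuning rules.

    ku: ultimate gain (P-only gain at which the loop sustains steady oscillation)
    tu: oscillation period at that gain, in seconds
    Returns (kp, ki, kd) for the parallel form u = kp*e + ki*integral(e) + kd*de/dt.
    """
    if controller == "p":
        return 0.5 * ku, 0.0, 0.0
    if controller == "pi":
        kp = 0.45 * ku
        ti = tu / 1.2            # integral time
        return kp, kp / ti, 0.0
    if controller == "pid":
        kp = 0.6 * ku
        ti = tu / 2.0            # integral time
        td = tu / 8.0            # derivative time
        return kp, kp / ti, kp * td
    raise ValueError("controller must be 'p', 'pi', or 'pid'")
```

For example, Ku = 10 and Tu = 2 s give Kp = 6, Ki = 6, and Kd = 1.5 for the full PID row.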

“What’s the difference between position, velocity, and torque control?”

Position control commands the robot to reach a location—common for manipulators reaching waypoints. Velocity control specifies speed—used for mobile robots and smooth motion. Torque (force) control directly regulates actuator effort—essential for interaction, impedance control, and safety.

The hierarchy: torque control is lowest level, velocity builds on torque, position on velocity. Many systems cascade them—outer position loop, inner velocity loop, innermost torque/current loop. This low-level control is often implemented on embedded systems.
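A cascade is easy to see in a toy simulation. The sketch below assumes a unit-mass point (acceleration equals the command), a P position loop that produces a clamped velocity setpoint, and a P velocity loop that produces the force command; all gains and limits are illustrative, and a real inner loop would usually be PI and run at a higher rate on embedded hardware.

```python
def simulate_cascade(x_target, kp_pos=2.0, kv_vel=20.0, v_max=1.0, dt=0.001, t_end=5.0):
    """Cascaded position -> velocity loop on a unit-mass point (x'' = u).

    Outer loop: P controller turns position error into a velocity setpoint,
    clamped to v_max. Inner loop: P controller turns velocity error into a
    force/torque command. Returns the final (position, velocity).
    """
    x, v = 0.0, 0.0
    for _ in range(int(t_end / dt)):
        v_ref = kp_pos * (x_target - x)          # outer (position) loop
        v_ref = max(-v_max, min(v_max, v_ref))   # velocity limit
        u = kv_vel * (v_ref - v)                 # inner (velocity) loop -> force
        v += u * dt                              # semi-implicit Euler integration
        x += v * dt
    return x, v
```

The inner loop here is tuned ten times faster than the outer one (20 vs 2). A common guideline for cascades is exactly this separation, so the outer loop sees the inner loop as nearly instantaneous.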

“Describe the Kalman Filter and when to use EKF vs UKF.”

The Kalman Filter is an optimal recursive estimator for linear systems with Gaussian noise. Two steps: prediction (propagate state and covariance using motion model) and update (correct using measurements, compute Kalman gain).

Extended Kalman Filter (EKF) linearizes nonlinear models using Taylor expansion—works well for mild nonlinearities. Unscented Kalman Filter (UKF) uses sigma points to propagate distributions—handles stronger nonlinearities better and doesn’t require Jacobians. Use EKF for computational efficiency and moderate nonlinearity; UKF for highly nonlinear systems like complex vision-based estimation.
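The predict/update cycle is easiest to see in one dimension. This sketch assumes a scalar state that changes only through an optional control input u, with process-noise variance q and measurement-noise variance r; the class name is hypothetical. The EKF and UKF keep this same two-step structure and mainly differ in how they push the mean and covariance through nonlinear models.

```python
class ScalarKalmanFilter:
    """1D Kalman filter for a state that is (nearly) constant between steps.

    q: process-noise variance added at each predict step
    r: measurement-noise variance
    """

    def __init__(self, x0, p0, q, r):
        self.x, self.p, self.q, self.r = x0, p0, q, r

    def predict(self, u=0.0):
        # Motion model x_k = x_{k-1} + u; uncertainty grows by q
        self.x += u
        self.p += self.q

    def update(self, z):
        # Kalman gain weighs the measurement against the prediction
        k = self.p / (self.p + self.r)
        self.x += k * (z - self.x)   # correct with the innovation z - x
        self.p *= (1.0 - k)          # uncertainty shrinks after a measurement
        return self.x
```

Fed 100 noisy readings scattered around 5.0 with r = 0.25, the estimate settles near 5.0 and p drops to a few thousandths, roughly r divided by the number of measurements.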

“How would you fuse accelerometer and gyroscope data for orientation?”

A complementary filter is the practical answer. The accelerometer measures the gravity direction, which gives a drift-free tilt reference at low frequencies but is noisy and corrupted by linear acceleration; the gyroscope gives smooth rate information, but integrating it drifts. Combine them: high-pass filter the integrated gyroscope angle (short-term accuracy), low-pass filter the accelerometer angle (long-term reference), and sum. For production systems, use a full attitude estimator such as the Madgwick or Mahony filter.
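One update of a single-axis complementary filter is a line of arithmetic. The sketch assumes the tilt is measured about an axis perpendicular to the accelerometer's y-z plane and that the robot is not accelerating, so atan2(accel_y, accel_z) is the tilt; alpha = 0.98 is a typical but illustrative blend factor, and the function name is hypothetical.

```python
import math

def complementary_filter(angle, gyro_rate, accel_y, accel_z, dt, alpha=0.98):
    """One update of a complementary filter for a single tilt angle (radians).

    alpha close to 1 trusts the integrated gyro at short time scales and lets
    the accelerometer slowly pull the estimate back toward the gravity direction.
    """
    gyro_angle = angle + gyro_rate * dt          # integrate rate: smooth but drifts
    accel_angle = math.atan2(accel_y, accel_z)   # gravity direction: noisy but drift-free
    return alpha * gyro_angle + (1.0 - alpha) * accel_angle
```

The blend has a quantifiable cost: for a constant gyro bias b, the filter settles at the accelerometer angle plus alpha·b·dt/(1 - alpha). With alpha = 0.98, b = 0.01 rad/s, and dt = 0.01 s, that offset is about 0.005 rad, whereas integrating the same gyro alone drifts by 0.01 rad every second without bound.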

Kinematics & Dynamics

“Explain forward vs inverse kinematics for a robotic arm.”

Forward kinematics (for serial arms) computes end-effector pose from joint angles—deterministic, single solution. Note: parallel manipulators like Stewart platforms make FK the hard problem. Inverse kinematics computes joint angles for desired end-effector pose—can have multiple solutions, no solution, or infinite solutions. IK is harder; common approaches include analytical solutions (for specific arm geometries), Jacobian-based methods (damped least squares for singularity handling), and numerical optimization.
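A planar two-link arm is the standard whiteboard case because its IK has a closed form and shows the multiple-solution issue directly. The sketch below, with hypothetical function names and link lengths l1 and l2, returns both elbow configurations when the target is reachable and an empty list when it is not.

```python
import math

def fk_2link(theta1, theta2, l1, l2):
    """Forward kinematics of a planar 2-link arm: joint angles -> (x, y)."""
    x = l1 * math.cos(theta1) + l2 * math.cos(theta1 + theta2)
    y = l1 * math.sin(theta1) + l2 * math.sin(theta1 + theta2)
    return x, y

def ik_2link(x, y, l1, l2):
    """Analytical IK: every (theta1, theta2) that reaches (x, y).

    Two solutions (elbow up / elbow down) in general, one when the arm is fully
    stretched or folded, none if the target is out of reach.
    """
    c2 = (x * x + y * y - l1 * l1 - l2 * l2) / (2 * l1 * l2)  # law of cosines
    if abs(c2) > 1.0:
        return []  # target outside the workspace
    solutions = []
    for sign in (1.0, -1.0):
        s2 = sign * math.sqrt(1.0 - c2 * c2)
        theta2 = math.atan2(s2, c2)
        theta1 = math.atan2(y, x) - math.atan2(l2 * s2, l1 + l2 * c2)
        solutions.append((theta1, theta2))
        if s2 == 0.0:
            break  # at the workspace boundary both branches coincide
    return solutions
```

Checking IK by feeding each solution back through FK, as in fk_2link(*sol, l1, l2), is a quick sanity test worth mentioning in an interview.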

“What is the Jacobian matrix used for in robotics?”

The Jacobian relates joint velocities to end-effector velocities: v = J(q) × q̇. Used for velocity kinematics (map joint space to task space), singularity analysis (J loses rank), and resolved motion rate control. In IK, Jacobian inverse methods compute joint velocities to achieve desired end-effector motion.
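For the same planar two-link arm, the Jacobian follows from differentiating the FK equations. Its determinant is l1·l2·sin(θ2), so the arm is singular when fully stretched or fully folded (θ2 = 0 or π). The sketch also includes a damped-least-squares step, dq = Jᵀ(JJᵀ + λ²I)⁻¹dx, a standard way to keep IK updates bounded near those singularities; the function names and the default damping of 0.05 are assumptions for illustration.

```python
import math

def jacobian_2link(theta1, theta2, l1, l2):
    """2x2 Jacobian of the planar 2-link arm: [xdot, ydot] = J @ [q1dot, q2dot]."""
    s1, c1 = math.sin(theta1), math.cos(theta1)
    s12, c12 = math.sin(theta1 + theta2), math.cos(theta1 + theta2)
    return [[-l1 * s1 - l2 * s12, -l2 * s12],
            [l1 * c1 + l2 * c12, l2 * c12]]

def dls_step(J, dx, damping=0.05):
    """Damped least squares for a 2x2 J: dq = J^T (J J^T + damping^2 I)^-1 dx.

    The damping keeps dq bounded near singularities (det J -> 0), at the cost
    of not tracking dx exactly there.
    """
    # A = J J^T + damping^2 I, a symmetric 2x2 matrix [[a, b], [b, d]]
    a = J[0][0] ** 2 + J[0][1] ** 2 + damping ** 2
    b = J[0][0] * J[1][0] + J[0][1] * J[1][1]
    d = J[1][0] ** 2 + J[1][1] ** 2 + damping ** 2
    det = a * d - b * b
    # y = A^-1 dx
    y0 = (d * dx[0] - b * dx[1]) / det
    y1 = (-b * dx[0] + a * dx[1]) / det
    # dq = J^T y
    return [J[0][0] * y0 + J[1][0] * y1,
            J[0][1] * y0 + J[1][1] * y1]
```

Away from singularities a tiny damping reproduces the exact inverse; near them a larger damping trades tracking accuracy for bounded joint velocities.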

SLAM & Navigation

“Explain the SLAM problem and its key components.”

Simultaneous Localization and Mapping: a robot builds a map of an unknown environment while tracking its position within that map. The chicken-and-egg problem—you need the map to localize, and your position to build the map.

Key components: Motion model predicts pose based on odometry. Observation model predicts sensor measurements given pose. Data association matches observations to map features. Loop closure detects when the robot revisits locations, correcting accumulated drift.

“Compare particle filter SLAM vs graph-based SLAM.”

Particle filter SLAM (the classical approach, e.g. Gmapping, a Rao-Blackwellized particle filter) uses Monte Carlo sampling: each particle carries a hypothesized robot trajectory and its own map. It works well for small environments but scales poorly. Advantages: easy to understand, handles non-Gaussian noise. Disadvantages: computation and memory grow quickly with environment size and with the number of particles needed. This approach is now considered legacy for most applications.

Graph-based SLAM (like GTSAM, Cartographer) poses SLAM as optimization: nodes are robot poses, edges are constraints from odometry and observations. Solve via least squares optimization. Scales better, handles loop closures elegantly, but assumes Gaussian noise. Most modern systems use graph-based approaches.
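To make "poses as nodes, constraints as edges, solve by least squares" concrete, here is a deliberately tiny 1D pose graph: poses are scalars, every edge measures x_j - x_i with a weight, and x_0 is fixed at 0 to remove the gauge freedom. This is an illustrative sketch with hypothetical function names; real back ends such as GTSAM work on SE(2)/SE(3), iterate Gauss-Newton or Levenberg-Marquardt, and use sparse solvers, whereas this linear case needs a single solve of the normal equations.

```python
def solve_pose_graph_1d(num_poses, edges):
    """Least-squares 1D pose graph.

    edges: list of (i, j, measured x_j - x_i, weight). Pose 0 is fixed at 0.
    Returns the optimized poses [x_0, ..., x_{num_poses-1}].
    """
    n = num_poses - 1                       # unknowns x_1 .. x_n
    H = [[0.0] * n for _ in range(n)]       # normal matrix J^T W J
    b = [0.0] * n                           # right-hand side J^T W z
    for i, j, meas, w in edges:
        terms = [(j, 1.0), (i, -1.0)]       # d(x_j - x_i)/dx_j = +1, /dx_i = -1
        for a, sa in terms:
            if a == 0:
                continue                    # x_0 is a constant, not an unknown
            b[a - 1] += w * sa * meas
            for c, sc in terms:
                if c != 0:
                    H[a - 1][c - 1] += w * sa * sc
    return [0.0] + _solve(H, b)


def _solve(A, rhs):
    """Gaussian elimination with partial pivoting (fine for tiny dense systems)."""
    n = len(rhs)
    M = [row[:] + [r] for row, r in zip(A, rhs)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        if abs(M[piv][col]) < 1e-12:
            raise ValueError("singular system: some pose is unconstrained")
        M[col], M[piv] = M[piv], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x
```

With four odometry edges of 1.0 and a loop-closure edge saying pose 4 is 3.6 from the start, odometry and loop closure disagree by 0.4. With equal weights the optimizer spreads that error evenly over all five edges, giving poses 0, 0.92, 1.84, 2.76, and 3.68.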

“How does loop closure work in visual SLAM?”

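Loop closure detects when the robot revisits a location, enabling drift correction. Visual approaches compare the current scene against a database of past views using place recognition: bag-of-visual-words models (for example DBoW2, used by ORB-SLAM), aggregated descriptors such as VLAD and Fisher vectors, learned global descriptors (NetVLAD), or direct feature matching. Candidates are usually verified geometrically before being accepted, because a single false loop closure can corrupt the whole map. Once a loop is confirmed, the optimizer adds a constraint between the current and past poses, propagating the correction throughout the trajectory.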

“How do you compute odometry from wheel encoders?”

For differential drive: left_dist = 2πr × (left_ticks / ticks_per_rev), same for right. Compute delta_dist = (left + right) / 2 and delta_theta = (right - left) / track_width. Update pose: x += delta_dist × cos(θ + delta_theta/2), y similarly, θ += delta_theta. Key error sources: wheel slip (especially during turns), uneven floors, tire deformation. Advanced systems add visual odometry or IMU to correct drift.

Perception & Computer Vision

“Compare SIFT, SURF, and ORB feature detectors.”

SIFT (Scale-Invariant Feature Transform) is rotation- and scale-invariant with robust matching. It was patent-encumbered until its patent expired in 2020, and it is computationally heavy: excellent for matching across views but slow for real-time use.

SURF (Speeded-Up Robust Features) speeds up SIFT-style detection by approximating its filters with box filters on integral images. It is faster than SIFT but still heavy, and like SIFT it has carried patent restrictions.

ORB (Oriented FAST and Rotated BRIEF) is the modern choice: FAST corner detector with rotation-aware BRIEF descriptors. Patent-free, extremely fast, binary descriptors enable efficient matching. ORB is standard for real-time robotics applications like visual odometry.
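Binary descriptors are compared with the Hamming distance (the number of differing bits), which is just an XOR plus a bit count. The sketch below stores each descriptor as a Python int (real ORB descriptors are 256 bits) and applies Lowe's ratio test; in practice OpenCV's BFMatcher with NORM_HAMMING does the same job in optimized code, and the function names here are hypothetical.

```python
def hamming(a, b):
    """Number of differing bits between two binary descriptors stored as ints."""
    return bin(a ^ b).count("1")

def match_binary(desc_a, desc_b, ratio=0.8):
    """Brute-force matching with Lowe's ratio test.

    Returns (i, j) pairs where desc_b[j] is the nearest neighbour of desc_a[i]
    and is clearly closer than the second-nearest candidate.
    """
    matches = []
    if len(desc_b) < 2:
        return matches  # the ratio test needs two candidates
    for i, da in enumerate(desc_a):
        dists = sorted((hamming(da, db), j) for j, db in enumerate(desc_b))
        (best, j_best), (second, _) = dists[0], dists[1]
        if best < ratio * second:
            matches.append((i, j_best))
    return matches
```

The ratio test rejects ambiguous matches, such as repeated texture where two candidates are nearly equally close, which matters more for pose estimation than keeping every match.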

“Explain stereo vision depth estimation pipeline.”

Stereo depth estimation follows a pipeline: Rectification aligns images so corresponding points share the same row. Matching finds corresponding pixels between left and right images using block matching or semi-global matching. Triangulation computes depth from disparity: depth = (baseline × focal) / disparity. Post-processing applies bilateral filtering to clean noise while preserving edges.

Key challenge: textureless regions lack features for matching. Active stereo projects a pattern onto the scene to add texture. Structured light (as in the original Kinect) replaces the second camera with a projector emitting a known pattern, but still recovers depth by triangulating the observed shift of that pattern.
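The triangulation step and its main limitation fit in a few lines. Differentiating depth = focal × baseline / disparity gives a depth error that grows with the square of depth, which is why stereo is a short-range sensor. The sketch assumes focal length in pixels and baseline in meters; the function names and numbers are illustrative.

```python
def disparity_to_depth(disparity_px, focal_px, baseline_m):
    """Depth (m) from disparity via z = f * B / d; None for invalid disparity."""
    if disparity_px <= 0:
        return None  # no match found, or point at infinity
    return focal_px * baseline_m / disparity_px

def depth_resolution(depth_m, focal_px, baseline_m, disparity_step_px=1.0):
    """Approximate depth change per disparity step: dz ~= z^2 / (f * B) * dd."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_step_px
```

With f = 700 px and B = 0.12 m, a 42 px disparity gives 2.0 m, and a one-pixel disparity error there is about 5 cm; at 4 m the same error is about 19 cm.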

“How do you balance classical planning with learning-based policies?”

By 2026, robotics sits at the intersection of classical approaches and end-to-end learning. Classical methods (behavior trees, A*, MPC) offer verifiability, interpretability, and safety guarantees. Learning-based methods (end-to-end policies, VLA models, foundation models) excel at perception-rich, unstructured environments where hand-crafted rules fail.

The strong answer: use classical methods for safety-critical constraints and fallback behaviors, and layer learning-based policies on top for perception and decision-making in domains where they have been validated. Sim2Real transfer (training in simulation such as Isaac Sim or MuJoCo, with domain randomization) is a common approach for bridging the gap.
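One concrete way to layer the two is a classical safety filter around a learned policy. In the hypothetical sketch below, the policy proposes a forward speed and a kinematic constraint caps it so the robot can always stop before the nearest obstacle ahead: v²/(2a) ≤ d - margin. It ignores latency, reversing, and lateral motion, all of which a real safety layer would have to handle.

```python
import math

def safety_filter(cmd_speed, obstacle_dist, max_decel, margin=0.5):
    """Clamp a learned policy's forward speed so the robot can always stop in time.

    Classical constraint: stopping distance v^2 / (2 * max_decel) must fit inside
    the free space ahead minus a safety margin. Negative (reverse) commands pass
    through unchanged because only obstacles ahead are considered.
    """
    free = max(0.0, obstacle_dist - margin)
    v_safe = math.sqrt(2.0 * max_decel * free)
    return min(cmd_speed, v_safe)
```

The learned component stays free to choose anything inside the envelope, while the constraint is simple enough to verify and test exhaustively.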

ROS/ROS2 Fundamentals

“Explain publisher-subscriber vs service-client communication patterns.”

Publisher-subscriber is asynchronous messaging—publishers send messages on topics, subscribers receive them. Many-to-many communication, decoupled. Ideal for sensor streams (camera images, lidar scans) where data flows continuously.

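Service-client is request-response: a client sends a request and a server returns a reply. Each call pairs one client with one server, and the client waits for the result (in ROS 2 the call is usually made asynchronously, e.g. call_async in rclpy, which returns a future). Use it for transactions: “save map,” “reset localization,” “get robot pose.”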

“What are QoS profiles in ROS 2 and when would you use each?”

Quality of Service policies control message delivery:

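RELIABLE: Acknowledged delivery with retransmission of lost samples. Use for control commands and mission-critical data.

BEST_EFFORT: Fire-and-forget with no retransmission; a lost sample is simply skipped. Use for high-frequency sensor data where latency matters more than completeness and the latest state is what counts.

History depth: KEEP_LAST N sets how many samples are queued. Combined with TRANSIENT_LOCAL durability, the publisher also delivers those stored samples to late-joining subscribers (this is how static transforms and maps are typically published). Configure based on downstream needs.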
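QoS mismatches are a classic debugging question: a subscriber that requests more than the publisher offers never receives data. The helper below is a hypothetical illustration of the request-versus-offered rule for reliability and durability only (it is not part of rclpy); in a real system, ros2 topic info --verbose shows each endpoint's QoS, and other policies such as deadline and liveliness follow similar compatibility rules.

```python
def qos_compatible(pub_reliability, sub_reliability, pub_durability, sub_durability):
    """Request-vs-offered check for two ROS 2 QoS policies.

    A subscription connects only if the publisher offers at least what it
    requests: RELIABLE outranks BEST_EFFORT and TRANSIENT_LOCAL outranks VOLATILE.
    """
    reliability_rank = {"BEST_EFFORT": 0, "RELIABLE": 1}
    durability_rank = {"VOLATILE": 0, "TRANSIENT_LOCAL": 1}
    return (reliability_rank[pub_reliability] >= reliability_rank[sub_reliability]
            and durability_rank[pub_durability] >= durability_rank[sub_durability])
```

The common failure mode is a RELIABLE subscriber on a BEST_EFFORT sensor topic: the node starts without errors but its callback never fires.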

“How do you handle coordinate frame transforms (TF tree)?”

The TF tree maintains relationships between coordinate frames: base_link, camera, laser, world, odom. Broadcast dynamic transforms for moving joints and static transforms for fixed sensors, then look up transforms to convert points between frames. Common pitfall: extrapolation errors, raised when you request a transform at a time newer than the latest data in the buffer (or older than the buffer retains). Buffer length and lookup timeouts matter for real systems.
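What a TF lookup does along a chain of frames is compose transforms. The sketch below is a 2D simplification with hypothetical function names: each transform is (x, y, yaw) of a child frame in its parent, whereas real TF (tf2) works in 3D with quaternions and timestamps and does this chaining for you.

```python
import math

def compose(a, b):
    """Compose 2D transforms a = parent_T_mid and b = mid_T_child into parent_T_child.

    Each transform is (x, y, yaw): the child frame's origin and heading in the parent.
    """
    ax, ay, ath = a
    bx, by, bth = b
    return (ax + math.cos(ath) * bx - math.sin(ath) * by,
            ay + math.sin(ath) * bx + math.cos(ath) * by,
            ath + bth)

def transform_point(t, p):
    """Express point p, given in t's child frame, in t's parent frame."""
    x, y, th = t
    return (x + math.cos(th) * p[0] - math.sin(th) * p[1],
            y + math.sin(th) * p[0] + math.cos(th) * p[1])
```

For example, with the robot at (1, 0) in odom facing +y and a laser mounted 0.2 m ahead of base_link, a return 1 m in front of the laser lands at (1.0, 1.2) in odom.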

Behavioral Questions That Actually Matter

Behavioral questions are universal, but robotics brings specific contexts. Use the STAR format: Situation, Task, Action, Result.

Project Deep Dive: “Tell me about the most challenging robotics project you’ve worked on.”

Focus on a project with genuine technical depth. Explain the problem, your specific contributions, technical obstacles, and how you solved them. Interviewers probe for hands-on experience versus coursework.

Failure & Learning: “Describe a robot failure you debugged—what was your process?”

Robotics failures are multidisciplinary: software bugs, sensor miscalibration, mechanical issues, integration problems. Walk through your systematic debugging approach. The best answers isolate root cause through structured experimentation, not guessing.

Multidisciplinary Collaboration: “How did you handle conflicting requirements between mechanical, electrical, and software teams?”

Robotics requires trade-offs. Maybe mechanical wants a larger battery (affects weight and dynamics), electrical wants compact layout (thermal issues), software needs more compute (power budget). Strong answers acknowledge constraints and describe collaborative problem-solving.

Safety Mindset: “Tell me about a time you identified a potential safety issue.”

Safety is non-negotiable in robotics. The best answers describe proactive risk identification and mitigation. Examples: adding emergency stops, implementing watchdog timers, validating sensor redundancy, catching a dangerous bug before deployment.

Sim2Real Gap: “Describe something that worked in simulation but failed on hardware. How did you debug it?”

This is a universal robotics experience. Simulators simplify friction and contact, sensors are noise-free unless you model the noise, and timing is idealized. The real world has latency, sensor noise, mechanical tolerances, and unexpected interactions. Strong answers walk through systematic debugging: identifying the discrepancy, forming hypotheses (sensor calibration? timing issue? control loop?), testing fixes, and validating on hardware.

Level-Specific Interview Differences

Interviews emphasize different aspects based on seniority.

Entry Level (0-2 years)

Focus: Fundamentals and learning ability. Entry-level coding questions emphasize clean, readable code over optimization. Behavioral: “How do you learn new technologies?” “Tell me about a project where you had to teach yourself something new.”

Mid-Level (2-5 years)

Focus: System design and ownership. Expect architecture questions: “Design a warehouse robot navigation stack.” Behavioral: “Tell me about a technical decision you drove.” “How do you handle competing technical approaches?”

Senior (5+ years)

Focus: Architecture and cross-team influence. Less coding implementation, more system design. Behavioral: “How do you evaluate competing technical approaches?” “Describe a time you mentored junior engineers.”

Lead/Principal (10+ years)

Focus: Technical direction and people management. Robotics leadership roles emphasize strategy over implementation. Design reviews, strategic decisions. Behavioral: “How do you shape technical strategy?” “Describe influencing organizational technical direction.”


4-Week Preparation Roadmap

| Week | Primary Focus | Daily Practice | Key Resources |
|---|---|---|---|
| Week 1 | Coding fundamentals | 1 LeetCode problem/day (graphs, trees, arrays) | LeetCode, Cracking the Coding Interview |
| Week 2 | Domain knowledge | Read + explain concepts aloud | Probabilistic Robotics, MIT OpenCourseWare |
| Week 3 | ROS/ROS2 + systems | Build nodes, whiteboard designs | ROS 2 tutorials, company research |
| Week 4 | Mock interviews | 2-3 practice sessions | Peer practice, refine STAR stories |

Week 1: Fundamentals Assessment

Assess your coding baseline with LeetCode easy/medium problems—focus on graphs, trees, arrays. Brush up on linear algebra (matrices, transformations) and probability basics (Bayes, Gaussian distributions). Practice one coding problem daily.

Week 2: Domain Knowledge Deep Dive

Focus areas based on your target role. Controls roles: dynamics, control theory, state estimation. Perception roles: computer vision, ML basics. Planning roles: path planning algorithms, search. Read relevant chapters from “Probabilistic Robotics” (Thrun). Practice explaining concepts aloud—you’ll whiteboard in interviews.

Week 3: ROS/ROS2 & System Design

Build a ROS 2 node from scratch—publisher, subscriber, maybe a simple service. Design 2-3 system architectures on paper: warehouse robot navigation stack, sensor fusion pipeline, fleet management system. Practice whiteboard explanations. Research your target companies.

Week 4: Mock Interviews & Refinement

Schedule 2-3 mock interviews with peers or platforms like instict.ai, which simulates real interviews based on your target role and company with instant feedback. Prepare 3 STAR stories for behavioral questions. Refine weak areas identified in mocks. Review your project work—you’ll dive deep on at least one.

Career changers may need 6-8 weeks rather than four. Adjust based on your starting point.

Resources for Continued Learning

Books: “Probabilistic Robotics” (Thrun et al.)—the SLAM bible. “Modern Robotics” (Lynch & Park)—mechanics, planning, control. Both are rigorous and worth owning.

Courses: Coursera Robotics Specialization (UPenn)—solid fundamentals. Udacity Robotics Nanodegree—project-based, good for portfolios.

Practice: LeetCode for coding fundamentals. ROS 2 official tutorials for middleware practice. instict.ai for simulated interviews with instant feedback. Simulation: NVIDIA Isaac Sim and MuJoCo for Sim2Real workflows (widely used in industry as of 2026), Gazebo for classic robotics testing.

Community: IEEE Robotics and Automation Society (RAS). arXiv robotics preprints for latest research. GitHub repositories for real code examples.

What to Ask the Interviewer

Good questions signal engagement and insight.

“What’s the biggest technical challenge your team is currently working on?” Reveals real problems and technical depth.

“How does your team balance research versus production engineering?” Some teams prototype new approaches; others ship production code. The answer helps you understand the role.

“What does success look like in this role in the first 6 months?” Sets expectations and shows you’re thinking about impact.

“How does the team approach safety and reliability in your robotics systems?” Safety-aware candidates stand out. This question demonstrates you understand what matters.


Conclusion

Robotics interviews follow patterns, but you have to know which pattern applies to your target company. Big tech wants algorithms and ML. Specialists want domain depth. Startups want shipping speed. Industrial wants safety and reliability. Research first, then prepare accordingly.

The candidates who stand out can explain trade-offs and technical reasoning, not just recite answers. Practice explaining your thinking aloud—you’ll be whiteboarding. Build projects that force you to debug real systems. Robotics is hands-on; your best interview stories will come from things that broke and how you fixed them.
